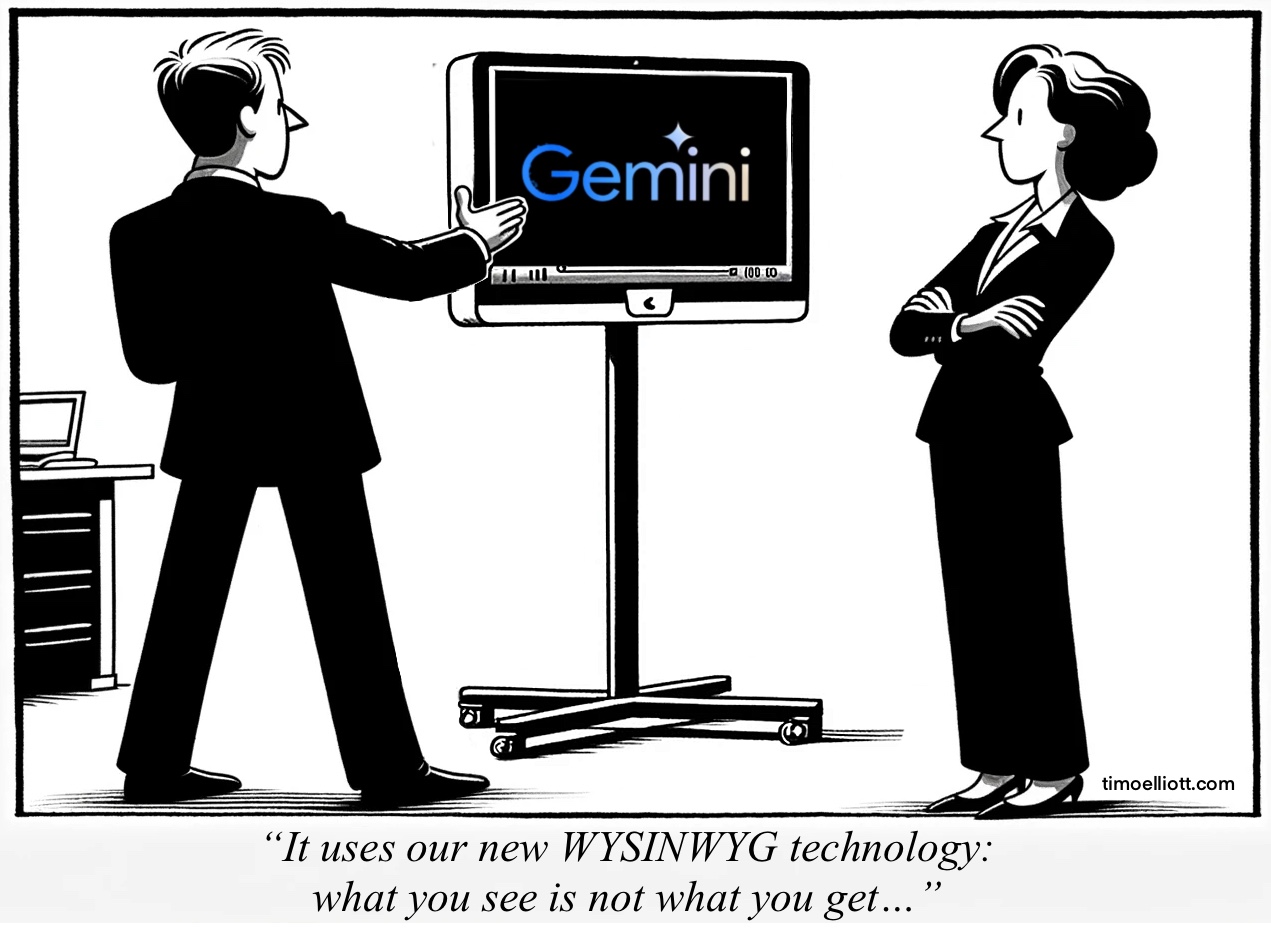

Google has unveiled the latest Gemini AI model. But it apparently runs on new WYSINWYG technology (What You See Is Not What You Get)…

One of my maxims has always been “if the reality is better than the marketing, you’re doing marketing wrong”—but there are limits, and the apparently-somewhat-faked launch video of the new Gemini Ultra model seems to have gone beyond them, generating significant backlash:

“Google admits Gemini AI Demo was at Least Partially Faked: This is just embarrassing” https://lnkd.in/euamvWyS

To be clear, this does seem to be a very capable model, the first to really challenge the complete dominance of OpenAI’s GPT-4. And I’ve heard people argue that this is just a question of timing: for example, taking a video and extracting single frames from it is known technology, so maybe Google just felt that what the video depicts in real time is an acceptable, short-term extrapolation of what the model can do.

Gemini Ultra’s multimodal approach, combining text, sound, and images in a single model (rather than bolting separate models together suboptimally, as with GPT-4 and DALL-E), should be very powerful.

But most of us will not be able to test it until next year. In the meantime, the less capable Gemini Pro model now powers Bard, but that seems to be equivalent to GPT-3.5 (and so a long way behind GPT-4).

In many ways, this was always Google’s race to lose, because of their incredible access to the world’s data. I expect them to be one of the clear leaders in the space going forward.

But their biggest challenge, and the reason they were probably late to the game despite being a machine learning pioneer, is their business model: these technologies could dramatically undermine the advertising business that they rely on for more than 80% of their revenue.

What do you think?

Comments

2 responses to “Gemini: WYSINWYG?”

I don’t get the conclusion. Why would having gigabytes of AI-produced content interfere with the ads business? On the contrary, doesn’t more content mean more space to sell advertising?

Herve, in the long term I think you’re right. But in the short term it upsets the apple cart, along with existing silos and revenue mechanisms. Today, they make money from the ads displayed on web pages, and Google’s search has been getting steadily worse as it prioritizes revenue over customer experience (see Cory Doctorow’s discussions of “enshittification”). With conversational interfaces that “just know the answer”, a lot of those crappy web pages can simply be avoided. All of the dumb SEO tricks will fundamentally change in ways that aren’t clear yet. People are already turning in droves to new AI-powered query engines like Perplexity AI…