I know it’s a strange question.

But as with any hot topic, there are a lot of different terms thrown around that can mean slightly different things and lead to confusion.

Here’s my attempt to clear things up.

The field of artificial intelligence (AI) research dates back to 1956, but the term has never referred to a specific type of technology.

Rather, it’s a “sociotechnical construct”: a term we use for machine capabilities that can solve complex tasks which, until recently, only humans could perform.

So AI is an umbrella term that encompasses a lot of different technologies. Most of the current interest in AI involves machine learning — algorithms that can learn on their own from data sets without having to be explicitly programmed.

Machine learning has become dramatically more powerful thanks to the increased availability of data, the plummeting cost of information storage, and access to massive computing power through cloud computing and more powerful processors.

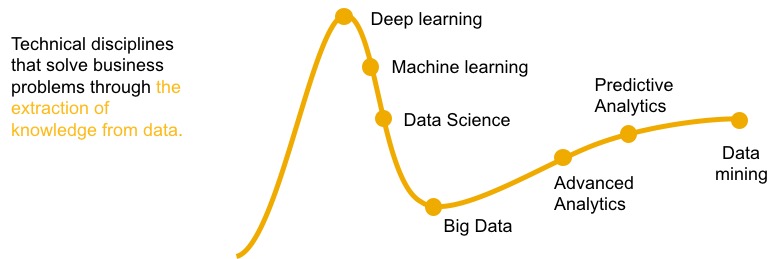

Machine learning includes automated forms of the kinds of statistical algorithms you may have learned at school. These have long been used inside organizations, and have typically been referred to as data science, data mining, predictive analytics, or advanced analytics.
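To make the link to school statistics concrete, here is a minimal Python sketch of one such algorithm, ordinary least-squares linear regression. Instead of hard-coding the rule, the program estimates a slope and an intercept from example data. The function name `fit_line` and the data points are hypothetical, chosen only for illustration; in practice you would normally reach for an established statistics or machine learning library rather than hand-rolled code.

```python
# A minimal sketch: "learning" a straight line y = a*x + b from example data
# with ordinary least squares. fit_line and the data are hypothetical.

def fit_line(xs, ys):
    """Return the slope a and intercept b that minimize the squared error."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance of x and y, and variance of x (both unnormalized)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    b = mean_y - a * mean_x
    return a, b

xs = [1, 2, 3, 4, 5]
ys = [3, 5, 7, 9, 11]   # made-up data that happens to follow y = 2x + 1
a, b = fit_line(xs, ys)
print(a, b)             # 2.0 1.0
print(a * 6 + b)        # prediction for x = 6: 13.0
```

Nobody told the program that the rule was "double it and add one"; it recovered that from the examples. Scaled up to far more data and far more flexible models, that is the basic idea behind machine learning.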

But because these terms have been in use for a long time, they are typically avoided by analysts and vendors trying to emphasize what is different about today’s AI opportunities.

One of the areas with the most recent progress is deep learning. It’s a specific type of machine learning that uses neural networks with many layers, loosely inspired by the way our own brains work. It’s the key technology behind recent breakthroughs in image tagging, voice transcription, automatic translation, and more.

Cognitive computing is another term, typically used for the most sophisticated types of AI technology that try to mimic human reasoning. But its precise meaning isn’t clear, and many analysts and vendors avoid it because it is so strongly associated with IBM.

The market seems to have settled into the following rule of thumb: if you’re talking about the business, consumer, or personal impact of these new technologies, you use the term Artificial Intelligence. But if you’re talking about the details of the technology to be implemented, you use the term Machine Learning.

Either way, precise definitions should never get in the way of the primary goal: getting more value from your data. You have business needs, and there are now new technologies available that can help. Whenever somebody tries to suck you into a nomenclature war and insists that they have the only “true” definition of one of these terms, try to bring the subject back to the concrete situation you are working on.

Comments

One response to “What is Artificial Intelligence Called?!”

Great post, Timo! Being a product manager for SAP Predictive Analytics, I can only agree that we are part of the wider Machine Learning family. Maybe we should rebrand ourselves 🙂