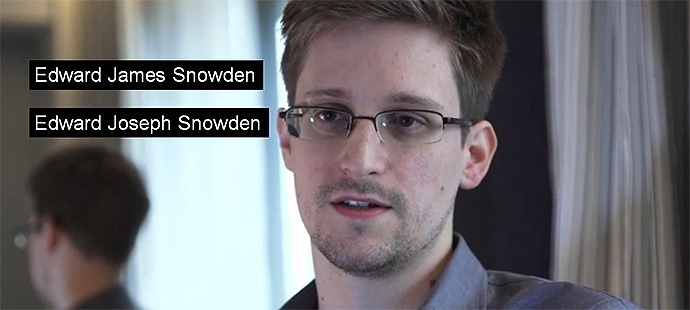

Even basic data quality problems can have big consequences. The National Security Agency learned this to its cost recently when the US applied for leaker Edward Snowden’s extradition from Hong Kong.

The extradition paperwork suffered from two classic data quality problems:

- Incorrect data — Snowden’s middle name was incorrectly noted as “James” instead of “Joseph”;

- Missing data — the passport number was not included.

These errors meant the order “did not fully comply with the requirements” of the Hong Kong authorities, giving them an excuse to turn down the request and avoid a unwanted diplomatic confrontation.

Snowden took the chance to slip away to the international transit lounge of a Moscow airport. And US diplomatic embarrassment didn’t end there, as more poor information resulted in Bolivian President Evo Morales’ plane being grounded because various European states had been assured that Snowden was on aboard.

The delicious irony of the NSA, whose massive databases on millions of Americans were revealed by Snowden, being unable to correctly spell the name of its own employee went largely uncommented.

Why? Probably because such errors are so commonplace that they are taken for granted. The NSA presumably has the right name it its systems, but this information was warped at some point as it was manually transferred onto the extradition document by a rushed official.

Equivalent situations happen every day in companies around the globe. Data quality problems are ubiquitous, and can have far-reaching consequences that aren’t always foreseen.

The moral of the story is that poor data quality is never a technical problem. It is always the result, directly or indirectly, of human error.

Thankfully, new tools are available that can help business people and IT organizations collaborate to fix data quality problems – using analytics to improve analytics.

Comments

One response to “The NSA’s Data Quality Problem”

[…] Facebook • Twitter • Delicious • LinkedIn • StumbleUpon • Add to favorites • Email • […]